UI Design using Midjourney

Text-to-image AI tools like Midjourney, DALL·E 2, and Stable Diffusion can generate images from plain text. The internet is now full of AI-generated images, but the question is, “Can text-to-image tools be used for UI design?”

In this article, we will see how AI tools can deal with regular UI tasks such as creating:

- UI screens

- App icons

- Product images

- Logos

- Mascots

To make our analysis more specific, we will generate UI assets for a particular product type (food delivery app).

Disclaimer: This article doesn’t try to convince you to use AI tools. It’s a pure experiment that shows what AI tools are capable of and what UI assets they can generate.

This article is also available in a video format:

A quick introduction to Midjourney

Midjourney is an online text-to-image tool. To use Midjourney, you need to join Discord. Visit https://www.midjourney.com/, click Join the Beta and follow the instructions to create your account.

Once you do that, you can join any channel with the prefix “newbies-” and start generating images by typing

/imagine [what you want to generate]

UI screens

The most important thing that we need to do when working with AI tools is to write clear prompts. A prompt is a text string that you submit to an AI tool so that it can generate an image for you. The prompt should clearly specify what you want to see.

The better you articulate your intention, the more relevant result you will get from AI.

When writing a prompt, think about how you would describe your design to an actual person and write that description down as a sentence. Then turn the sentence into tokens (short phrases separated by commas).

The tokenized format is better for Midjourney because it helps you trim all unnecessary words while keeping essential contextual information.

For example, in the context of a food delivery app, the tokenized prompt might be:

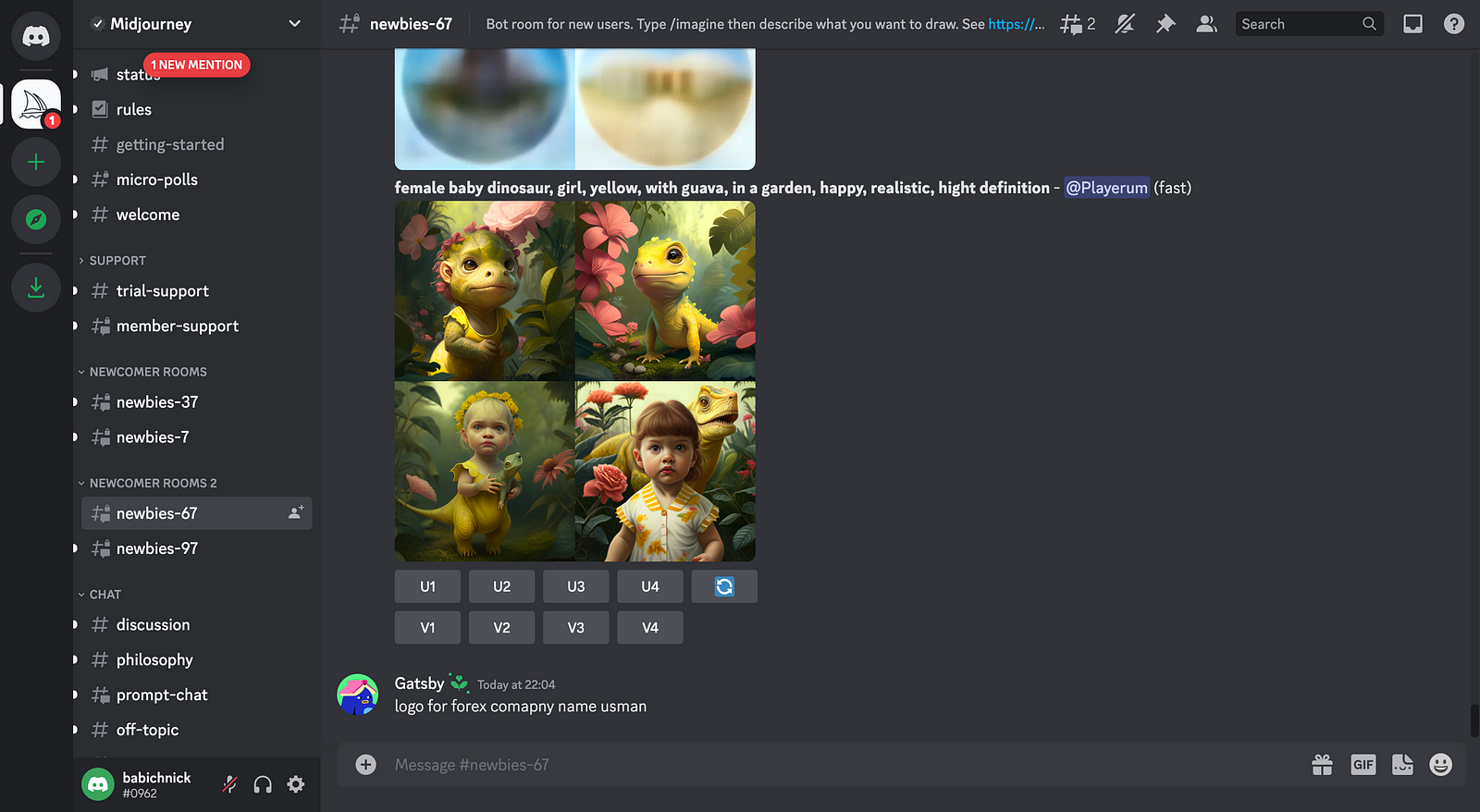

/imagine high-quality UI design, food delivery mobile app, trending on Dribbble, Behance
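As a minimal sketch of the tokenized format, the short Python helper below joins a list of phrases into a comma-separated prompt. The function name `build_prompt` is hypothetical and only for illustration; Midjourney itself just receives the final text that you type in Discord.

```python
def build_prompt(tokens):
    """Join short phrases into a comma-separated Midjourney prompt."""
    # Drop empty entries and surrounding whitespace so stray commas never appear.
    cleaned = [t.strip() for t in tokens if t and t.strip()]
    return "/imagine " + ", ".join(cleaned)


prompt = build_prompt([
    "high-quality UI design",
    "food delivery mobile app",
    "trending on Dribbble",
    "Behance",
])
print(prompt)
```

Keeping tokens in a list makes it easy to add, remove, or reorder phrases between attempts.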

By default, Midjourney generates four options for you to choose from. You can upscale options using the command U[number]. Let’s see what the upscaled version of the first design will look like.

If you compare the upscaled version to the previous one, you might notice that Midjourney not only increases the size and quality of the image but also slightly modifies the layout. For example, the upscaled version doesn’t feature a red button in the top-left corner of the screen.

You have likely noticed two significant problems in the images generated by the tool: gibberish text and corrupted food preview images. Unfortunately, these problems affect not only UI screens but many kinds of images generated by Midjourney.

Our first take wasn’t perfect, so you might wonder how to modify the prompt to achieve better results. There is no single best strategy for writing prompts. You need to experiment with different tokens, so expect to make at least a few attempts before you get a decent output.
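One way to organize this kind of experimentation is to generate prompt variants from a fixed base plus a few groups of alternative tokens. The sketch below is only an illustration: the helper name `prompt_variants` and the token groups are assumptions, and each resulting prompt still has to be submitted to Midjourney by hand.

```python
from itertools import product


def prompt_variants(base_tokens, option_groups):
    """Yield one prompt per combination, taking one token from each group."""
    for combo in product(*option_groups):
        # An empty string in a group means "leave this optional token out".
        tokens = list(base_tokens) + [t for t in combo if t]
        yield "/imagine " + ", ".join(tokens)


variants = list(prompt_variants(
    ["food delivery app", "user interface"],
    [["Figma", "Behance"], ["clean UI", ""]],
))
for variant in variants:
    print(variant)
```

With two groups of two options each, this produces four prompts to try side by side.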

Let’s modify our prompt to see how the tool will react to it. This time we will mention “Figma” because we will likely want to use the assets in the design tool.

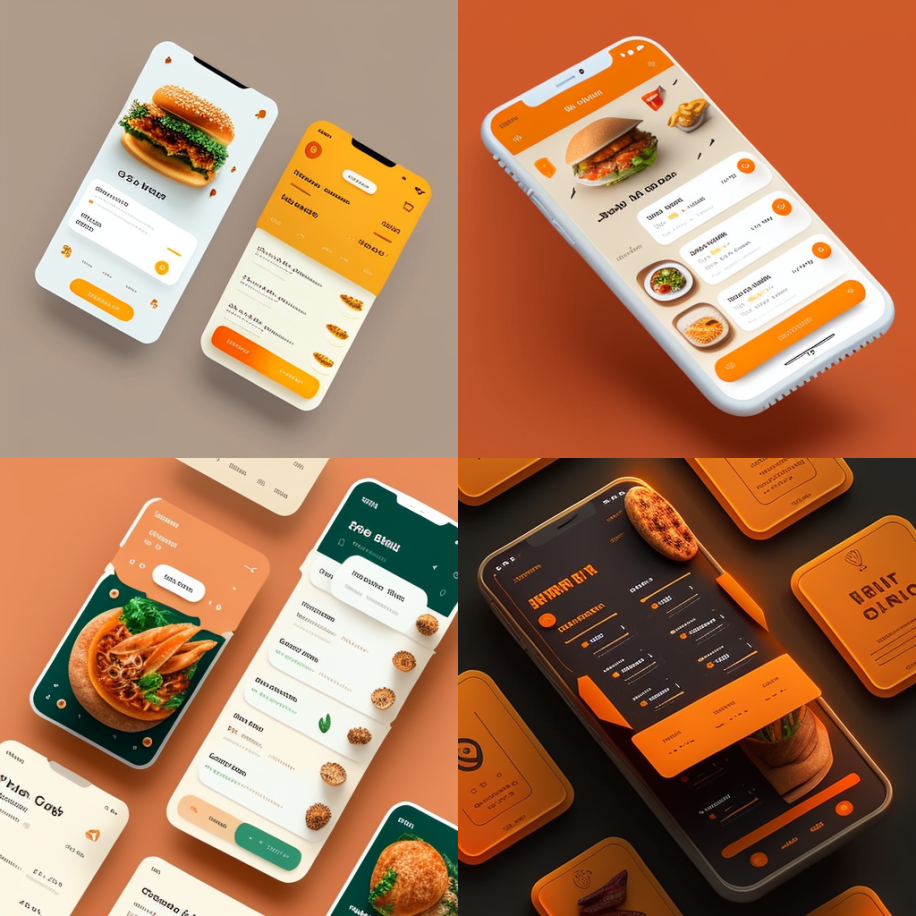

/imagine food delivery app, user interface, Figma, Behance, HQ, 4K, clean UI

Let’s see what the upscaled version of the first design will look like.

This version looks more like something we can use in design. The layout has a clear visual hierarchy of elements.

Let’s make another take on UI design using Midjourney. This time we will drop the tokens “Dribbble” and “Behance,” leaving only “Figma,” and add the parameter “--q 2” to increase the quality of the images.

/imagine [what you want to generate] --q <number>

Here is our prompt:

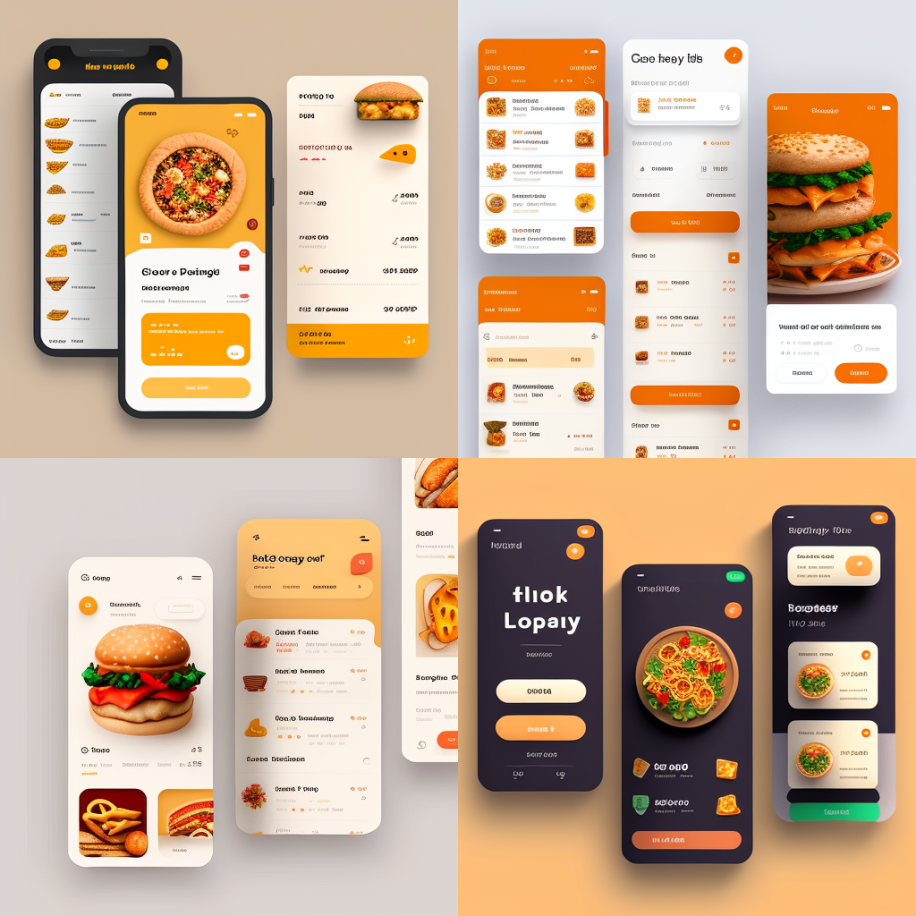

/imagine Food delivery app, mobile app, user interface, Figma, HQ, 4K, clean UI --q 2
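The quality parameter can be sketched in code as a suffix added after the text tokens. The helper name `with_quality` is hypothetical; the sketch assumes only that the parameter is written as `--q` followed by a number, with no space between the dashes and the letter.

```python
def with_quality(prompt, quality=None):
    """Append Midjourney's quality parameter to a prompt string."""
    if quality is None:
        return prompt
    # Parameters go after the text tokens; "--q" is written without a space.
    return f"{prompt} --q {quality}"


base = "/imagine Food delivery app, mobile app, user interface, Figma, HQ, 4K, clean UI"
print(with_quality(base, 2))
```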

The third option looks promising. Let’s upscale it to see more details.

You can see that the results Midjourney generates, even after the third try, cannot be used without refinement from a human designer. Nevertheless, this raw output can be very helpful during the early stages of the design process because it can serve as visual inspiration. For example, it’s relatively easy to create a moodboard of AI-generated images and use it as a reference for visual designers to create a proper visual language.

It’s also vital to mention that we’ve used a very general prompt for UI design. If we want to see a particular screen (such as a restaurant search page), we should clearly specify it in a prompt as a token so that the system can get us a more relevant result.

Imagery

Imagery is photos and illustrations used in various parts of a product. Imagery is integral to user experience because it communicates essential information to users and sets the right mood. Finding the right imagery can be challenging during product design, so let’s see if Midjourney can help us with that.

App Icon

An app icon is a simple yet very powerful element because it communicates what the app is all about and creates a first impression on users. The icon is the first thing users see, even before they start to use a product, and its level of craftsmanship directly impacts how they will perceive the product.

Since we are designing a mobile app, we need to clearly specify the token “iOS app” as well as mention that we want to see high-quality visual assets (tokens “high resolution” and “high quality”). And because our app is about food delivery, we might want to mention one particular type of food (“burger”).

/imagine icon for iOS app in high resolution, burger, high quality, HQ --q 2

As you can see, Midjourney generated a set of ultra-realistic icons. Let’s upscale the second option.

The style that Midjourney uses for icons is great, but it might not work for an actual project because many designers prefer minimalist icons. Let’s give it a second try using a slightly different prompt. We need to include tokens like “minimalism” and “flat design” to signal to the AI that we want to see simple icons.

/imagine icon for iOS app in high resolution, burger, high quality, minimalism, flat design --q 2
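When prompts change only slightly between attempts, it can help to see exactly which tokens were added or removed. The sketch below compares the two icon prompts; the helper names `split_tokens` and `diff_prompts` are hypothetical and not part of Midjourney.

```python
def split_tokens(prompt):
    """Return the comma-separated tokens of a prompt, without command or parameters."""
    if prompt.startswith("/imagine"):
        prompt = prompt[len("/imagine"):]
    # Everything from the first "--" onward is treated as parameters, not tokens.
    text = prompt.split("--")[0]
    return [t.strip() for t in text.split(",") if t.strip()]


def diff_prompts(old, new):
    """Return (removed, added) token lists between two prompts."""
    old_tokens, new_tokens = split_tokens(old), split_tokens(new)
    removed = [t for t in old_tokens if t not in new_tokens]
    added = [t for t in new_tokens if t not in old_tokens]
    return removed, added


first = "/imagine icon for iOS app in high resolution, burger, high quality, HQ --q 2"
second = "/imagine icon for iOS app in high resolution, burger, high quality, minimalism, flat design --q 2"
removed, added = diff_prompts(first, second)
print("removed:", removed)
print("added:", added)
```

Recording such differences next to the generated images makes it easier to tell which token caused a change in style.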

Now the output looks like something we can use in a real project. Let’s upscale the first option.

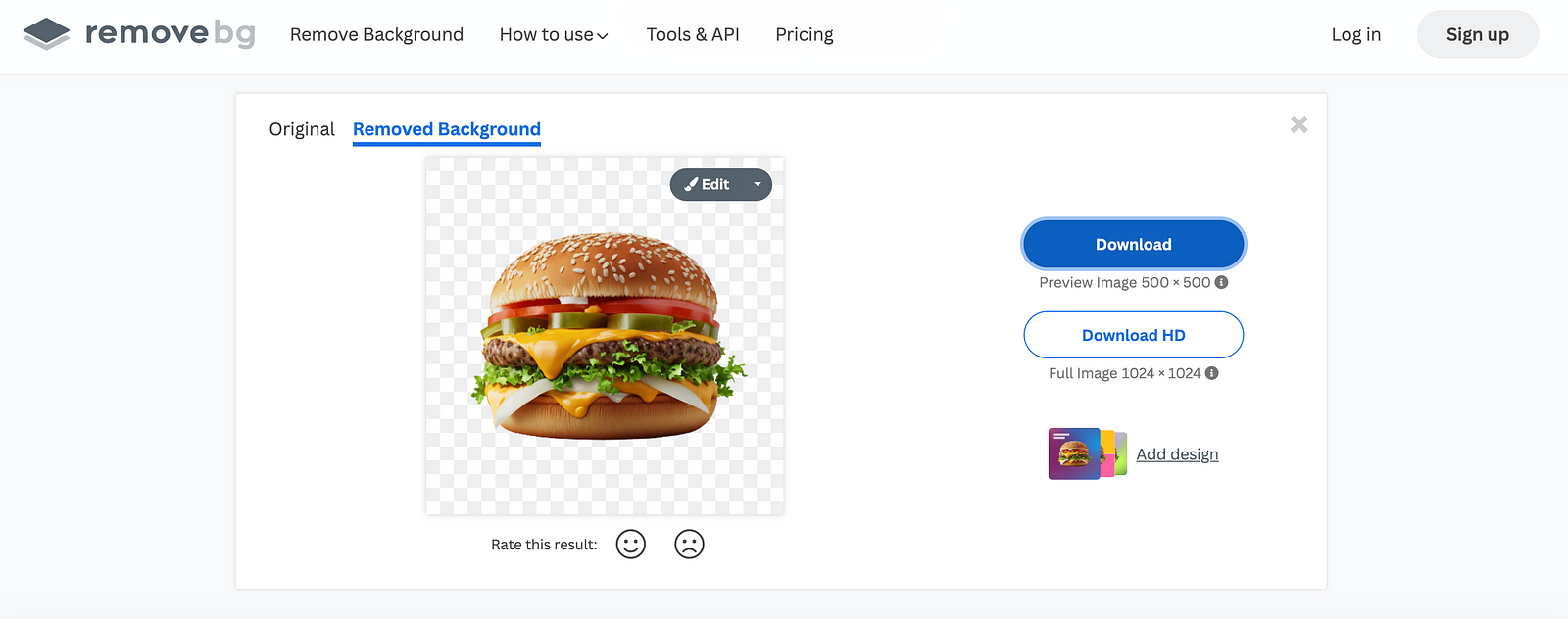

Product images

Product images are the images we show on product pages. Since we are designing a food ordering app, our images will be photos or illustrations of food. Let’s ask Midjourney to generate images for us:

/imagine burger, high quality picture, high resolution, HQ, 4K --q 2

Midjourney generated solid images, but they have one major drawback: they are placed on a dark background, which means extra work to extract the object from the background. We can use a simple trick and ask Midjourney to use a transparent or white background:

/imagine burger on a transparent background, high quality, high resolution, 4K --q 2

The first image looks great; let’s upscale it.

Now, all we need to do is remove the background from this image. To do that, we can use an online service like Remove.bg. Upload the image to remove.bg and download the ready-to-use visual asset.

Promo illustrations

Promo illustrations are versatile visual assets that can be used inside the app or on a promo website. Let’s see if Midjourney can create a decent illustration. We want to communicate the idea that our food is delicious. So the prompt we provide is:

/imagine happy man eating a burger, high resolution, high quality illustration

The cool thing is that the images are generated in different styles, from watercolor painting to highly realistic photo illustration. But it is evident that Midjourney had a hard time pairing happiness with eating. Let’s upscale the second image to see if the upscaled version looks better.

You have likely noticed another problem with the images that Midjourney generates: legs and fingers. The tool often adds an extra leg or finger when it generates images of people. In our example, however, the tool removed fingers and reshaped the human hand so that it looks like a claw.

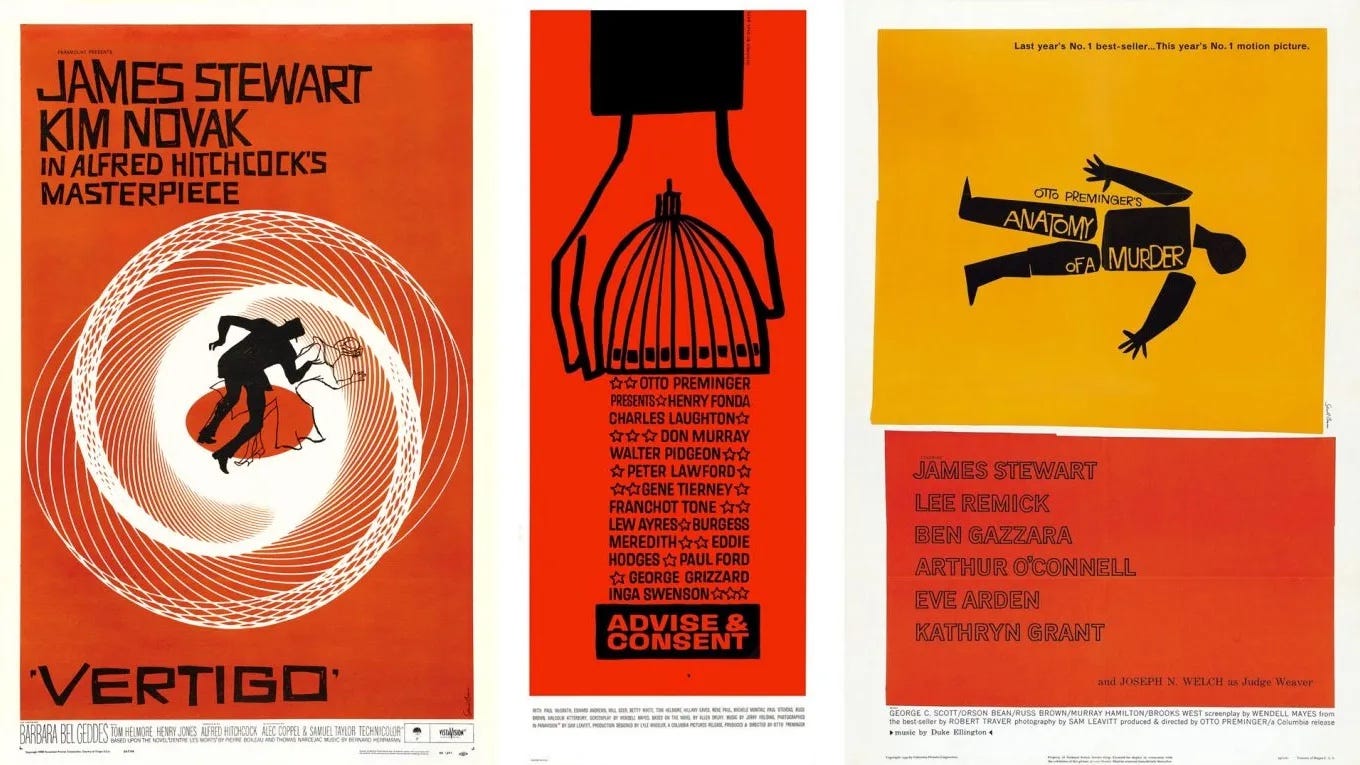

Logo

A logo is a core element of branding. When it comes to logo design, we have to ensure its meaning is clear to customers and the logo itself is memorable. The great thing about Midjourney is you can ask the tool to generate images in a particular style. When it comes to logo design, we can follow the style of famous graphic designers such as Saul Bass, who is known for designing posters for movies like Vertigo (directed by Alfred Hitchcock).

Here is what Midjourney creates for a prompt:

/imagine minimal logo of a burger, graphic style of Saul Bass

Midjourney indeed uses some elements of Saul Bass’s style, such as typefaces and color scheme, yet the final result doesn’t look like something from his works.

Mascot

A mascot is a graphical object used to represent a business. A well-crafted mascot helps people remember a brand and communicates a certain mood. Let’s ask Midjourney to generate an image of a mascot for a food delivery company mentioning a particular style (Japanese).

/imagine simple mascot for a food delivery company, Japanese style

Midjourney generated a few nice examples of mascots, but only one of them (the fourth option) can be used with minimal modification.

Can Midjourney replace UI designers?

No. At least not right now. The outputs the tool generates are very rough and often require refinement from designers. Midjourney is also very dependent on the prompt: the quality of the output can vary drastically with the wording you provide.

Does this mean the tool has no place in the designer’s toolkit? No. The tool can be beneficial during the early phases of a product design process, such as ideation and visual exploration. Midjourney can be especially useful for moodboarding. It can help a team compare many visual styles before committing to one.